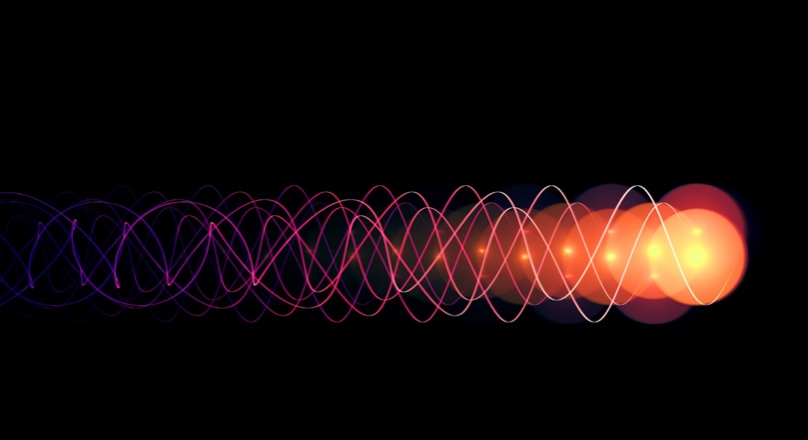

Computer scientists from the University of Toronto have built an advanced camera setup to visualize light in motion from any perspective, opening avenues for further inquiry into new 3D sensing techniques.

The researchers developed a sophisticated AI algorithm that can simulate what an ultra-fast scene—a pulse of light speeding through a pop bottle or bouncing off a mirror—would look like from any vantage point. The work is published on the arXiv preprint server.

David Lindell, an assistant professor in the Department of Computer Science in the Faculty of Arts & Science, says the feat requires the ability to generate videos in which the camera appears to "fly" alongside the photons of light as they travel.

"Our technology can capture and visualize the actual propagation of light with the same dramatic, slowed-down detail," says Lindell. "We glimpse the world at speed-of-light timescales that are normally invisible."

Advanced sensing capabilities

The researchers believe the approach, which was recently presented at the 2024 European Conference on Computer Vision, can unlock new capabilities in several important research areas. These include non-line-of-sight imaging, a method that allows viewers to "see" around corners or behind obstacles using multiple bounces of light; imaging through scattering media, such as fog, smoke, biological tissue or turbid water; and 3D reconstruction, where understanding the behaviour of light that scatters multiple times is critical.

In addition to Lindell, the research team included U of T computer science PhD student Anagh Malik, fourth-year engineering science undergraduate Noah Juravsky and Professor Kyros Kutulakos, as well as Stanford University Associate Professor Gordon Wetzstein and PhD student Ryan Po. The researchers' key innovation lies in the AI algorithm they developed to visualize ultrafast videos from any viewpoint, a challenge known in computer vision as "novel view synthesis."

Speed of light

Traditionally, novel view synthesis methods are designed for images or videos captured with regular cameras. However, the researchers extended this concept to handle data captured by an ultra-fast camera operating at speeds comparable to light, which posed unique challenges. For example, their algorithm needed to account for the speed of light and model how it propagates through a scene.
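To give a sense of why the speed of light matters here, the following minimal Python sketch computes how long a pulse takes to travel from a light source to a point in the scene and on to a camera. It is an illustration only, not the researchers' algorithm: the function name `travel_time_ps`, the coordinates and the assumption of straight-line travel through air (treated as vacuum) are all hypothetical choices made for this example.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, metres per second


def travel_time_ps(source, point, camera):
    """Time for light to go source -> scene point -> camera, in picoseconds.

    Assumes straight-line propagation at the vacuum speed of light
    (a simplification; no refraction or multiple bounces are modelled).
    """
    path_m = math.dist(source, point) + math.dist(point, camera)
    return path_m / C * 1e12


# Hypothetical scene: a light source at the origin and a surface point 1 m away.
source = (0.0, 0.0, 0.0)
point = (0.0, 0.0, 1.0)

# Two hypothetical camera positions looking at the same point.
camera_a = (0.0, 0.0, 0.0)  # next to the source
camera_b = (2.0, 0.0, 1.0)  # 2 m to the side of the point

t_a = travel_time_ps(source, point, camera_a)
t_b = travel_time_ps(source, point, camera_b)
print(f"camera A sees the pulse after {t_a:.1f} ps")
print(f"camera B sees the pulse after {t_b:.1f} ps")
```

The same flash of light reaches the two cameras at different times, so a video rendered from a new viewpoint has to shift when each event appears, not just where it appears.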

Through their work, the researchers observed moving-camera visualizations of light in motion, including light refracting through water, bouncing off a mirror or scattering off a surface. They also demonstrated how to visualize phenomena that occur only at a significant fraction of the speed of light, as predicted by Albert Einstein.

While current algorithms for processing ultra-fast videos typically focus on analyzing a single video from a single viewpoint, the researchers say their work is the first to extend this analysis to multi-view light-in-flight videos, allowing for the study of how light propagates from multiple perspectives.

The research also holds significant potential for improving LIDAR (Light Detection and Ranging) sensor technology used in autonomous vehicles. Typically, these sensors process data immediately to create 3D images. But the researchers' work suggests the potential to store the raw data, including detailed light patterns, to help create systems that outperform conventional LIDAR by seeing more detail, looking through obstacles and understanding materials better.
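As a rough illustration of the difference between reducing LIDAR data immediately and keeping the raw signal, the toy Python sketch below converts a made-up histogram of photon arrival times into distances using the standard round-trip relation (distance = speed of light × time / 2). The histogram values, bin width, threshold and function names are all assumptions invented for this example; they do not describe any real sensor or the researchers' system.

```python
C = 299_792_458.0  # speed of light in vacuum, metres per second


def peak_range_m(histogram, bin_width_s):
    """Keep only the strongest return and convert it to a distance."""
    peak_bin = max(range(len(histogram)), key=histogram.__getitem__)
    return C * (peak_bin * bin_width_s) / 2.0


def all_returns_m(histogram, bin_width_s, threshold):
    """Keep every bin at or above a threshold, preserving weaker returns."""
    return [
        C * (i * bin_width_s) / 2.0
        for i, count in enumerate(histogram)
        if count >= threshold
    ]


# Hypothetical photon counts per 1-nanosecond time bin for one laser pulse.
raw_histogram = [0, 1, 9, 1, 0, 4, 0]
bin_width_s = 1e-9

print(peak_range_m(raw_histogram, bin_width_s))
print(all_returns_m(raw_histogram, bin_width_s, threshold=3))
```

In this made-up example the single-peak reduction reports one surface, while the raw histogram also contains a weaker, later return that the reduction discards.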